In general, it’s a good thing when cluster-based platforms, like Elasticsearch, are able to keep operating even if some parts of the cluster become unreachable. But what happens if the unreachable nodes haven’t actually crashed, but have simply been cut off from the rest of the cluster due to a problem like a network failure?

In that case, the cluster’s determination to keep running can lead to a problem known as ‘Elasticsearch split brain’. Split brain occurs when a cluster splits into two or more isolated segments, with each believing – incorrectly – that the others have gone down.

Split brain in Elasticsearch can lead to major issues, including data loss and downtime. Hence the importance of knowing how to prevent split brain risks, how to detect a split brain situation if it arises, and how to fix the issue quickly, before your Elasticsearch cluster experiences data loss or other severe issues.

Read on for guidance on all of the above as we explain everything Elasticsearch admins need to know about split brain causes, prevention, and recovery.

What is split brain in Elasticsearch?

Split brain is the division of an Elasticsearch cluster into two or more independent sub-clusters. The sub-clusters are not able to communicate with each other, so they end up operating in isolation. Each sub-cluster will also appoint its own master node and cease trying to communicate with the cluster’s original master node or nodes (if the latter are not part of the sub-cluster).


Root causes of Elasticsearch split brain in distributed environments

Split brain in Elasticsearch occurs when one part of the cluster can no longer reach other parts. Several underlying root causes can lead to this scenario:

- Network failure: The most common root cause of Elasticsearch split brain is networking problems that cause some nodes not to be able to send and receive data from other nodes. The result is the division of the Elasticsearch cluster into independent “network partitions,” each running in isolation from the others.

- Hardware issues: Hardware problems, like a server that crashes due to a temporary power outage, can cause cluster splitting if they result in a master node being down or unreachable. When this happens, other data nodes will appoint a new master.

- Garbage collection pauses: While garbage collection is normal, it can trigger split brain scenarios in cases where the process takes so long that it causes a master node to stop responding temporarily. Other data nodes will then elect a new master, leading to cluster fracturing.

- Misconfigured master node requirements: Setting the minimum number of master-eligible nodes required to elect a master too low (the discovery.zen.minimum_master_nodes setting in Elasticsearch versions before 7.0) can cause multiple nodes to appoint themselves as masters, especially if the original master becomes temporarily unavailable. The result is the splitting of the cluster into sub-clusters, each governed by its own master node.

- Using different Elasticsearch versions: The processes for node discovery and master election can vary between Elasticsearch versions. As a result, deploying different versions of Elasticsearch can lead to situations where some nodes appoint different masters than others, causing cluster fracturing.
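To illustrate why a low minimum is risky, here is a minimal Python sketch that models a network partition. It is not Elasticsearch code: the function names and the idea of passing partition sizes as a list are assumptions made for illustration only.

```python
def majority(master_eligible_total):
    """Smallest node count that is a strict majority of the master-eligible nodes."""
    return master_eligible_total // 2 + 1


def partitions_able_to_elect(partition_sizes, minimum_master_nodes):
    """Return the partitions that hold enough master-eligible nodes to elect a master.

    partition_sizes: number of master-eligible nodes on each side of the split
    (hypothetical input for this model).
    """
    return [size for size in partition_sizes if size >= minimum_master_nodes]


# Three master-eligible nodes split into a group of two and a group of one.
split = [2, 1]

# Minimum set too low: both sides can elect a master, which is split brain.
too_low = partitions_able_to_elect(split, minimum_master_nodes=1)

# Minimum set to a majority: only the larger side can elect a master.
safe = partitions_able_to_elect(split, minimum_master_nodes=majority(3))
```

In this model, more than one entry in the result means more than one side of the partition could end up with its own master.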

Consequences of Elasticsearch split brain for data integrity and availability

When split brain occurs in Elasticsearch, the sub-clusters can generally start communicating again once they are able to reach one another. They will also reach a consensus over which master node should be the legitimate one, and will abandon any “false” masters that were governing sub-clusters independently during the split brain state.

So, you might not think that split brain is a big deal, given that Elasticsearch can typically pull itself out of a split brain state automatically.

In reality, however, significant problems can arise as a result of a split brain condition, even when it resolves itself:

- Data loss: Split brain can cause permanent data loss in the event that different sub-clusters read and write data independently during the time that they are isolated from each other. When they rejoin each other, data can be lost in the process of reconciling the records maintained by each sub-cluster.

- Data inconsistency: Even if no data is permanently lost, information can become inconsistent during the reconciliation process. For example, indexing data could vary between sub-clusters, leading to inconsistent search results when users query Elasticsearch.

- Downtime: The process of confirming a master and reintegrating sub-clusters takes time. During this period, the Elasticsearch cluster may be unavailable, leading to service downtime.

Generally speaking, the risks of data integrity problems and downtime increase the longer a split brain condition goes on. Having a split brain for just a few seconds may not be a big deal, especially if few data write events occur during that period. The risks of major problems start to become significant when the split brain state lasts for at least several minutes.

To make matters worse, external Elasticsearch monitoring tools may “think” that a cluster is healthy during or following a split brain state because the cluster’s nodes may respond to health checks, and the cluster might also continue to process queries. But in reality, there could be data integrity issues under the hood, and query processing may be inconsistent.

Strategies to detect Elasticsearch split brain before data loss occurs

To prevent Elasticsearch data loss and downtime, it’s critical to detect split brain issues as rapidly as possible. The best way to do this is to run automated checks that verify the following data:

- Cluster state changes: Monitor the cluster state (available from the /_cluster/state API) for sudden changes, such as a change in the elected master node or in the number of nodes in the cluster.

- Cluster composition: Poll each node directly (for example, using the /_nodes and /_cat/master endpoints) to verify that all nodes report the same master node and total cluster size. If they don’t, there’s an inconsistency that likely indicates a split brain state.

- Unassigned shards: Split brain often leads to a spike in unassigned Elasticsearch shards because the cluster will struggle to assign shards during an inconsistent configuration state. Monitoring the unassigned_shards count can alert you to this scenario.

- Network connectivity: Monitoring for network health problems, like high rates of packet loss or high latency during communications between nodes, can provide early warning about Elasticsearch split brain risks. Networking issues like these don’t necessarily mean that split brain has occurred, but they are a leading cause of split brain.
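The composition check described above could be automated along the following lines. This is a rough sketch, not a definitive implementation: it assumes you have already queried every node individually and collected each node's own view into a dictionary. The `views` shape, the function name, and the `expected_nodes` parameter are all hypothetical, and fetching the data over HTTP is left out.

```python
def detect_split_brain(views, expected_nodes):
    """Compare each node's view of the cluster and return a list of findings.

    views: dict mapping node name -> {"master_node": str or None,
                                      "number_of_nodes": int}
           (assumed shape, built from per-node API responses)
    expected_nodes: the cluster size you expect when everything is healthy
    """
    findings = []

    # Every node should report the same elected master.
    masters = {view["master_node"] for view in views.values()}
    if len(masters) > 1:
        names = sorted(str(master) for master in masters)
        findings.append(f"nodes disagree on master: {names}")

    # Every node should see the full cluster.
    for name, view in sorted(views.items()):
        seen = view["number_of_nodes"]
        if seen != expected_nodes:
            findings.append(f"{name} sees {seen} nodes, expected {expected_nodes}")

    return findings
```

An empty result means all nodes agree. Any finding is a reason to alert, since a node that answers health checks can still belong to an isolated sub-cluster.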

Ideally, your monitoring and observability tools will not only check for anomalies like those described above, but also generate alerts so that admins can respond quickly. When it comes to Elasticsearch split brain, every second matters for achieving effective recovery without data loss.

How to recover from an Elasticsearch split brain incident safely

As we said, Elasticsearch clusters can typically resolve split brain incidents automatically in the sense that sub-clusters will transform back into a single cluster governed by a consistent master node – but this doesn’t mean that the split brain problem is solved.

On the contrary, manual intervention is often necessary to ensure that the newly reconstituted cluster operates normally and does not experience data loss. Key manual intervention steps include:

- Pausing all ingestion to prevent any further data changes until the split brain is fully resolved.

- Reviewing the existing state of Elasticsearch data to identify which sub-cluster’s records are the most up-to-date.

- Restoring only the sub-cluster whose records are most up-to-date.

- Allowing other nodes to rejoin the cluster after the initial sub-cluster (the one restored in the previous step) is fully operational.

This process works if you can afford to restore just one sub-cluster’s data and discard the records of the other sub-clusters. But what if multiple sub-clusters have data that you need to retain, and the data is divergent between the sub-clusters? In that case, you’d typically have to export the data from each sub-cluster using a tool like Elasticsearch Dump, then merge it into a new, single index. After that, you can import the data back into your cluster.
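The merge step might look something like the sketch below. It makes several assumptions that may not hold for your data: each export has already been parsed into a list of dictionaries with `_id` and `_source` keys, every document carries a timestamp field (here hypothetically named `updated_at`) in a consistent, sortable format such as ISO 8601 in UTC, and "newest write wins" is an acceptable rule. Conflicting IDs are returned so they can be reviewed by hand.

```python
def merge_exports(*exports, timestamp_field="updated_at"):
    """Merge documents exported from several sub-clusters.

    Returns (merged_docs, conflicting_ids). When two exports hold different
    versions of the same _id, the one with the later timestamp is kept.
    """
    merged = {}
    conflicts = set()

    for export in exports:
        for doc in export:
            doc_id = doc["_id"]
            current = merged.get(doc_id)
            if current is None:
                merged[doc_id] = doc
                continue
            if current["_source"] != doc["_source"]:
                # Same ID, different content: record it and keep the newer copy.
                conflicts.add(doc_id)
                newer = doc["_source"][timestamp_field]
                older = current["_source"][timestamp_field]
                if newer > older:
                    merged[doc_id] = doc

    return list(merged.values()), sorted(conflicts)
```

Reviewing the conflict list before re-importing is worthwhile, because a timestamp comparison cannot tell you which write was the one your application actually intended to keep.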

How to prevent Elasticsearch split brain in Kubernetes and cloud deployments

Better than having to recover from a split brain state is preventing one in the first place. The following best practices can help in this regard, especially if you run Elasticsearch in a distributed environment like Kubernetes or a public cloud:

- Run nodes on the same network: Where feasible, keeping all Elasticsearch nodes on the same local network or subnet can help prevent split brain because it reduces the chances of a networking issue triggering a breakdown in communications between nodes.

- Deploy at least three dedicated master nodes: Running at least three master-eligible nodes mitigates split brain risks because electing a master then requires a majority (two out of three). If one master-eligible node becomes disconnected from the others, it cannot elect itself as a new master. It simply drops from the cluster rather than creating a new, independent sub-cluster.

- Keep Elasticsearch master nodes on different Kubernetes nodes: If master nodes run as Kubernetes Pods, configure pod anti-affinity rules to prevent more than one master node Pod from running on the same Kubernetes node. This is helpful because it reduces the risk of having multiple Elasticsearch master nodes fail at the same time (which would happen if they reside on the same Kubernetes node and that node goes down).

- Keep Elasticsearch versions consistent: As noted above, running the same version of Elasticsearch across nodes avoids the risk that varying master election processes will trigger a split brain condition.
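As a simple way to audit the placement practice above, the following Python sketch flags Kubernetes nodes that host more than one Elasticsearch master Pod. The `pod_to_node` mapping and the function name are hypothetical. In practice you would build the mapping from your cluster's Pod listing, which is not shown here.

```python
from collections import defaultdict


def colocated_masters(pod_to_node):
    """Return {kubernetes_node: [pods]} for nodes running more than one master Pod.

    pod_to_node: dict mapping master Pod name -> Kubernetes node name
    (assumed to be collected separately).
    """
    pods_by_node = defaultdict(list)
    for pod, node in pod_to_node.items():
        pods_by_node[node].append(pod)

    # Keep only the nodes that violate the one-master-per-node rule.
    return {
        node: sorted(pods)
        for node, pods in pods_by_node.items()
        if len(pods) > 1
    }
```

An empty dictionary means each master Pod sits on its own Kubernetes node. Any entry identifies a node whose failure would take down several masters at once.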

Operational challenges of managing Elasticsearch split brain at scale

The larger the scale of your Elasticsearch cluster, the harder it typically is to detect and respond to split brain issues. This is because more Elasticsearch nodes mean a higher chance that some nodes will stop communicating with others. In addition, larger clusters often store more data, and the more data you have to work with, the trickier it usually is to reconcile data safely following split brain.

There are no simple solutions to these challenges. However, automation plays an important role in managing Elasticsearch split brain at scale. Specifically, admins should automate the health checks we described above to detect split brain issues quickly. Having scripts on hand for exporting, merging, and re-importing data into Elasticsearch can also help to ensure a speedy recovery when dealing with large volumes of divergent data.

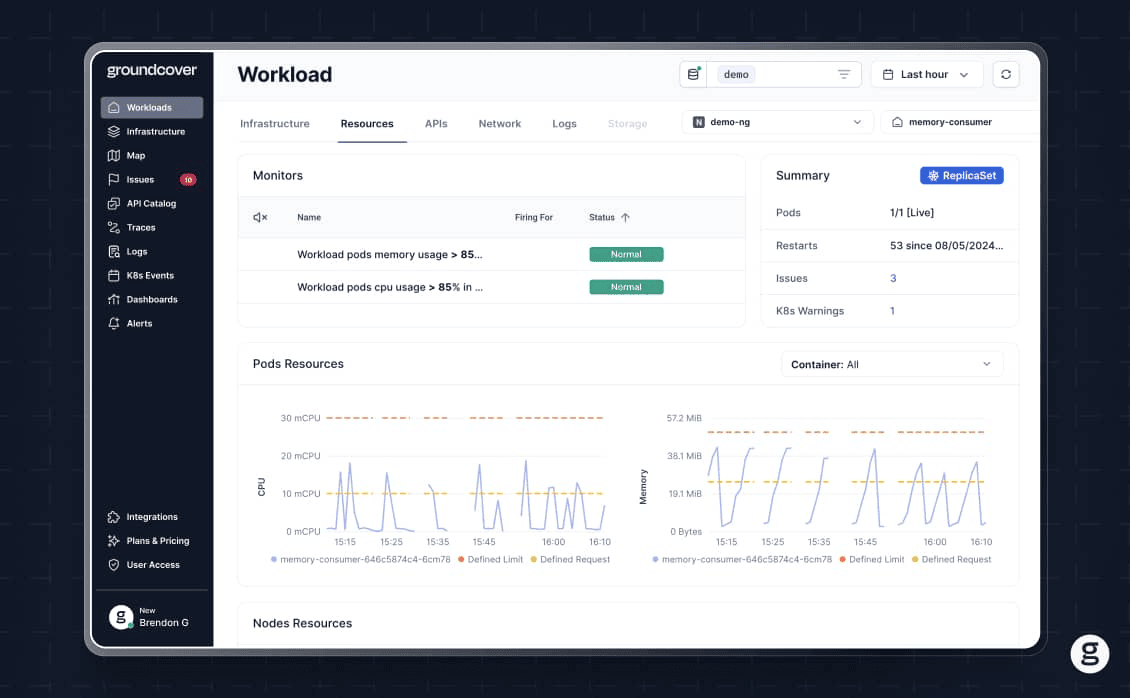

Real-time cluster visibility to prevent Elasticsearch split brain with groundcover

By delivering deep, granular visibility into the state of your entire stack – including not just Elasticsearch nodes but also the Kubernetes cluster or cloud infrastructure that hosts them, and the network infrastructure over which they communicate – groundcover clues admins in quickly to split brain risks so they can take action before experiencing data loss or major downtime.

Just as important, groundcover allows teams to validate that their Elasticsearch clusters are up and running normally following a split brain recovery. It does this by collecting observability data that goes much deeper than simply checking whether nodes are up; with groundcover, you get extensive visibility into what is actually happening on each node.

Getting ahead of split brain risks in Elasticsearch

You can’t guarantee that networking, hardware, or configuration issues won’t trigger a split brain event in your Elasticsearch environment. But you can take steps to minimize the risk of this happening. Just as important, you can monitor continuously to identify split brain conditions rapidly when they do occur, and have automations in place to help restore data safely.
